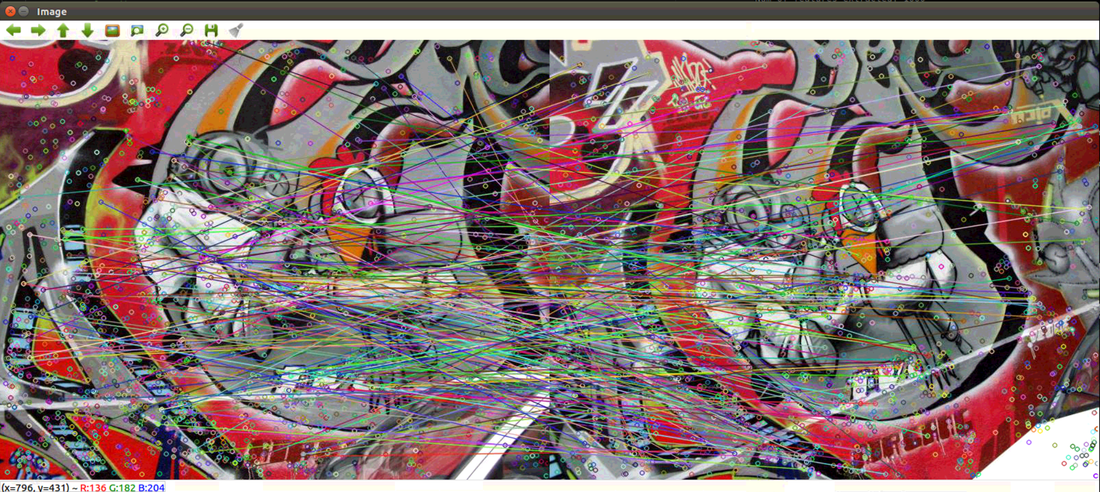

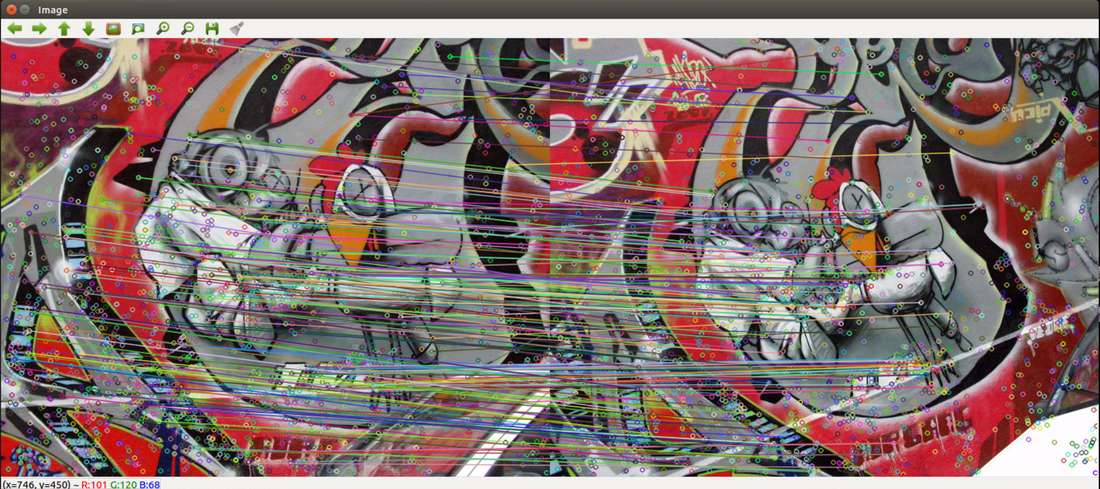

Part I: Technical difficulty connecting via TeamViewer

I could not connect to the lab computer via TeamViewer on several occasions this week. The problem was eventually fixed with Zach's help by following the instructions on this page: https://www.thewindowsclub.com/teamviewer-stuck-on-initializing-display-parameters

Part II: Debugging

As suggested by Professor Tron, I manually set the k-means centers to the mean vector of all features for node0 and to negative values for all the other nodes. During debugging, I verified that all the features go to node0, so that I could compare my matching result with the result of the centralized version.

Bug 1: After adding numerous print statements, I found that the bandwidth calculated in the 'calc_density' function was incorrect. The function was outputting [0 1 2 3 4 ... 2999] instead of the correct bandwidth. When implementing the function, I had changed some code to work around another error, and I forgot to change it back after that earlier debugging session. After I changed the code back to match the centralized version, the function output the correct bandwidth.

Bug 2: In order to calculate matches, we have to keep track of which image a particular feature belongs to. There was a mistake in the implementation: I was keeping track of which node a feature belongs to, instead of which image. This bug resulted in all the features belonging to the same cluster in the 'break_merge_tree' function, since all the features belong to node0 in my debugging setup. After the correction, all the image numbers from 0 to 5 appeared (since there are 6 images) in 'break_merge_trees'.

Bug 3: This mistake is similar to Bug 2. In the 'features_to_Dmatch' function, I compared indices with the node numbers instead of the image numbers for each feature. It took me a long time to find it, but after I corrected it, the number of matches the algorithm found was exactly the same as the number of matches the centralized algorithm found (Figure 1 & 2).
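The debugging setup from Part II (one center at the mean feature vector, every other center pushed to negative values) can be sketched as follows. This is only an illustration and not the actual project code: the helper names `make_debug_centers` and `assign_to_nodes`, the value `-1000.0`, and the random stand-in features are all assumptions, and it relies on the descriptors being non-negative, as SIFT descriptors are.

```python
import numpy as np


def make_debug_centers(features, num_nodes, far_value=-1000.0):
    """Build centers so that node 0 sits at the mean feature vector and
    every other node sits at a constant negative vector (hypothetical helper)."""
    centers = np.full((num_nodes, features.shape[1]), far_value, dtype=float)
    centers[0] = features.mean(axis=0)
    return centers


def assign_to_nodes(features, centers):
    """Assign each feature to its nearest center (squared Euclidean distance)."""
    diffs = features[:, None, :] - centers[None, :, :]
    dists = np.sum(diffs ** 2, axis=2)
    return np.argmin(dists, axis=1)


# Stand-in for non-negative 128-dimensional SIFT descriptors (made-up data).
rng = np.random.default_rng(0)
features = rng.random((300, 128))

centers = make_debug_centers(features, num_nodes=4)
labels = assign_to_nodes(features, centers)

# With the other centers far away in the negative direction,
# every feature should be routed to node 0.
print(np.unique(labels))
```

With every feature on node0, the distributed pipeline sees the same data as the centralized one, which is what makes a direct comparison of the match counts meaningful.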
Suspected Bug 4: (This is unresolved! Help!) After correcting Bug 3, the matches are now correct. However, the matches drawn in the images do not look the same as the ones in the centralized version (Figure 3 & 4). I believe this is because 'quickmatch_node.py' re-calculates the keypoints of the images for graphing.

In the centralized version, the keypoints were calculated during feature extraction, and the same keypoints were used for graphing in the 'features_to_Dmatch' function. However, I was unable to reuse the keypoints recorded during feature extraction, because my feature extraction function and my 'features_to_Dmatch'/graphing function are in two different Python files ('node.py' and 'quickmatch_node.py' respectively). As a result, I computed the keypoints again using the built-in `cv2.xfeatures2d.SIFT_create` function and `sift.detectAndCompute` function.

I have discovered that the built-in feature extraction function does not output the same keypoints every time. For the centralized code, when I print out the keypoints, the results from one run differ from the results from another run. Although the keypoints were not used in the match calculations, they were used for graphing.

I tried to publish the keypoints obtained during feature extraction in 'node.py', so that 'quickmatch_node.py' could get the same keypoints for graphing. However, they are of the type <cv2.KeyPoint>, and ROS does not support publishing a cv2 object. I highly suspect that this is the reason why the graphs are not showing up correctly even though the matches are already correct.

Figure 3: Correct graphing of distributed version on the same image.

Figure 4: Incorrect graphing results of the distributed version (above) and correct graphing results of the centralized version (below).

Next steps:
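One possible workaround for the keypoint-publishing problem described above is to convert each `cv2.KeyPoint` into plain numbers before sending it and to rebuild the objects on the receiving side. The sketch below is untested against the actual nodes. The helper names are hypothetical, and carrying the JSON text in a standard ROS string message is an assumption about how the data would be published.

```python
import json


def pack_keypoints(keypoints):
    """Turn cv2.KeyPoint-like objects into plain lists of numbers.

    Each row is [x, y, size, angle, response, octave, class_id].
    """
    return [
        [
            float(kp.pt[0]),
            float(kp.pt[1]),
            float(kp.size),
            float(kp.angle),
            float(kp.response),
            int(kp.octave),
            int(kp.class_id),
        ]
        for kp in keypoints
    ]


def unpack_keypoints(rows):
    """Rebuild cv2.KeyPoint objects from the rows made by pack_keypoints."""
    import cv2  # imported here so that packing alone does not need OpenCV

    return [
        cv2.KeyPoint(x, y, size, angle, response, int(octave), int(class_id))
        for x, y, size, angle, response, octave, class_id in rows
    ]


def keypoints_to_json(keypoints):
    """Serialize keypoints to a JSON string (sender side, e.g. 'node.py')."""
    return json.dumps(pack_keypoints(keypoints))


def keypoints_from_json(text):
    """Deserialize keypoints from a JSON string (receiver side)."""
    return unpack_keypoints(json.loads(text))
```

If this works, 'quickmatch_node.py' would no longer need to call `sift.detectAndCompute` a second time, so graphing would use the keypoints that the descriptors were actually computed from.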
